BUILD a better mousetrap, the saying goes, and the world will beat a path to your door. Find a way to beat the stockmarket and they will construct a high-speed railway. As investors try to achieve this goal, they draw on the work of academics. But in doing so, they are changing both the markets and the way academics understand them.

The idea that financial markets are “efficient” became widespread among academics in the 1960s and 1970s. The hypothesis stated that all information relevant to an asset’s value would instantly be reflected in the price; little point, therefore, in trading on the basis of such data. What would move the price would be future information (news) which, by definition, could not be known in advance. Share prices would follow a “random walk”. Indeed, a book called “A Random Walk Down Wall Street” became a bestseller.

The idea helped inspire the creation of index-trackers, funds that simply buy all the shares in a benchmark like the S&P 500. From small beginnings in the 1970s, trackers have been steadily gaining market share. They command around 20% of all assets under management today.

But the efficient-market hypothesis has repeatedly been challenged. When the American stockmarket fell by 23% in a single day in October 1987, it was hard to find a reason why investors should have changed their assumptions so rapidly and substantially about the fair value of equities. Robert Shiller of Yale won a Nobel prize in economics for work showing that the overall stockmarket was far more volatile than it should be if traders were adequately forecasting the fundamental data: the cashflows received by investors.

Another example of theory and practice parting company is in the foreign-exchange market. When Sushil Wadhwani left a hedge fund to join the Bank of England’s monetary policy committee (MPC) in 1999, he was taken aback by the way the bank forecast currency movements. The bank relied on a theory called “uncovered-interest parity”, which states that the interest-rate differential between two countries reflects the expected change in exchange rates. In effect, this meant that the forward rate in the currency market was the best predictor of exchange-rate movements.

Mr Wadhwani was surprised by this approach, since he knew many people who used the “carry trade”, ie, borrowing money in a low-yielding currency and investing in a higher-yielding one. If the bank was right, such a trade should be unprofitable. After some debate, the bank agreed on a classic British compromise: it forecast the currency would move half the distance implied by forward rates.
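The arithmetic behind the disagreement can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function names, the one-year horizon, the exchange rate of 1.50 and the interest rates of 1% and 5% are all made up for the example, and the forward rate is computed from the standard interest-parity relationship rather than from any method the bank is known to have used.

```python
def forward_rate(spot, rate_home, rate_foreign):
    """One-year forward price of a unit of foreign currency, in home currency.

    Assumes the standard interest-parity relationship: the forward rate
    offsets the interest-rate differential between the two countries.
    """
    return spot * (1 + rate_home) / (1 + rate_foreign)


def halfway_forecast(spot, forward, weight=0.5):
    """The compromise forecast: move only part of the way towards the forward rate."""
    return spot + weight * (forward - spot)


def carry_return(spot_start, spot_end, rate_home, rate_foreign):
    """Profit per unit borrowed at home and invested abroad for one year."""
    # Convert to foreign currency, earn the foreign rate, convert back, repay the loan.
    return (spot_end / spot_start) * (1 + rate_foreign) - (1 + rate_home)


# Hypothetical numbers: borrow at 1% at home, invest at 5% abroad.
spot, r_home, r_foreign = 1.50, 0.01, 0.05
fwd = forward_rate(spot, r_home, r_foreign)

if_parity_holds = carry_return(spot, fwd, r_home, r_foreign)
if_spot_unchanged = carry_return(spot, spot, r_home, r_foreign)
if_halfway = carry_return(spot, halfway_forecast(spot, fwd), r_home, r_foreign)
```

With these made-up inputs the carry trade earns nothing if the currency moves all the way to the forward rate, the full four-point interest differential if the exchange rate does not move at all, and two points under the halfway forecast.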

Many who work in finance still believe they can beat the market. After all, there was a potential flaw at the heart of the efficient-market theory. For information to be reflected in prices, there had to be trading. But why would people trade if their efforts were doomed to be unprofitable?

One notion, says Antti Ilmanen, a former academic who now works for AQR, a fund-management company, is that markets are “efficiently inefficient”. In other words, the average Joe has no hope of beating the market. But if you devote enough capital and computer power to the effort, you can succeed.

That helps explain the rise of the quantitative investors, or “quants”, who attempt to exploit anomalies: quirks that cannot be explained by the efficient-market hypothesis. One example is the momentum effect: shares that have outperformed the market in the recent past continue to do so. Another is the “low-volatility” effect: shares that move less violently than the market produce better risk-adjusted returns than theory predicts.
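A momentum screen of the kind described above can be sketched very simply. The version below is a toy, not any fund's actual method: the tickers and prices are invented, and real strategies use longer histories, adjust for risk and trading costs, and often skip the most recent month.

```python
def trailing_return(prices, lookback):
    """Percentage change over the last `lookback` periods."""
    return prices[-1] / prices[-1 - lookback] - 1.0


def momentum_portfolio(price_history, lookback, top_n):
    """Rank shares by recent performance and keep the best `top_n`."""
    ranked = sorted(
        price_history,
        key=lambda name: trailing_return(price_history[name], lookback),
        reverse=True,
    )
    return ranked[:top_n]


# Invented prices for four hypothetical shares, oldest first.
prices = {
    "AAA": [100, 104, 110, 121],
    "BBB": [50, 49, 51, 50],
    "CCC": [20, 21, 20, 23],
    "DDD": [80, 78, 75, 72],
}

winners = momentum_portfolio(prices, lookback=3, top_n=2)
```

Here the screen picks AAA and CCC, the two shares with the strongest recent gains. The bet is that they keep outperforming.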

A new breed of funds, known in the jargon as “smart beta”, have emerged to exploit these anomalies. In a sense these funds are simply trying to mimic, in a systematic way, the methods used by traditional fund managers who interview executives and pore over balance-sheets in an attempt to pick outperforming stocks.

Whether these funds will prosper depends on why the anomalies have been profitable in the past. There are three possibilities. The first is that the anomalies are statistical quirks; interrogate the data for long enough and you may find that stocks outperform on wet Mondays in April. That does not mean they will continue to do so.

The second possibility is that the excess returns are compensation for risk. Smaller companies can deliver outsize returns but their shares are less liquid, and thus more difficult to sell when you need to; the firms are also more likely to go bust. Two academics, Eugene Fama and Kenneth French, have argued that most anomalies can be explained by three factors: a company’s size; its price relative to its assets (the value effect); and its volatility.

The third possibility is that the returns reflect some quirk of behaviour. The outsize returns of momentum stocks may have been because investors were slow to realise that a company’s fortunes had improved. But behaviour can change; Mr Wadhwani says share prices are moving more on the day of earnings announcements, relative to subsequent days, than they were 20 years ago. In other words, investors are reacting faster. The carry trade is also less profitable than it used to be. Mr Ilmanen says it is likely that returns from smart-beta factors will be lower, now that the strategies are more popular.
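The first of these three possibilities, that an anomaly is merely a statistical quirk, is easy to demonstrate with a simulation. The sketch below is an illustration only: the returns are random noise, each “rule” is an arbitrary set of days on which to hold the market, and the function name, sample sizes and seed are all assumptions chosen for the example.

```python
import random


def mine_rules(n_days=1000, n_rules=200, seed=0):
    """Test many arbitrary trading rules on returns that are pure noise."""
    rng = random.Random(seed)
    # Two independent periods of random returns: no rule has real predictive power.
    first_period = [rng.gauss(0.0, 1.0) for _ in range(n_days)]
    second_period = [rng.gauss(0.0, 1.0) for _ in range(n_days)]

    results = []
    for _ in range(n_rules):
        # A rule is an arbitrary tenth of the calendar (think "wet Mondays in April").
        days = rng.sample(range(n_days), n_days // 10)
        in_sample = sum(first_period[d] for d in days) / len(days)
        out_of_sample = sum(second_period[d] for d in days) / len(days)
        results.append((in_sample, out_of_sample))
    return results


results = mine_rules()
# Pick the rule that looked best in the first period, as a data-miner would.
best_in_sample, best_out_of_sample = max(results)
```

The best rule's average return in the first period is positive by selection alone, since it is the luckiest of 200 tries. Its average in the second period says nothing of the sort, because the rule never had any predictive power to begin with.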

If markets are changing, so too are the academics who study them. Many modern research papers focus on anomalies or on behavioural quirks that might cause investors to make apparently irrational decisions. The adaptive-markets hypothesis, devised by Andrew Lo of the Massachusetts Institute of Technology, suggests that the market develops in a manner akin to evolution. Traders and fund managers pursue strategies they believe will be profitable; those that are successful keep going; those that lose money, drop out.

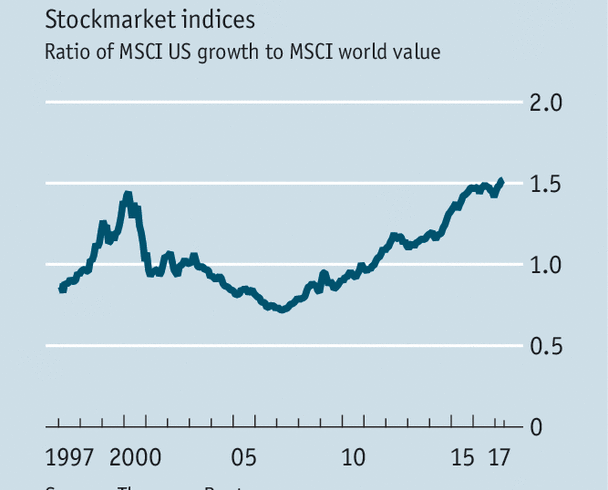

The results can be dramatic. In August 2007 there was a “quant quake” as computerised strategies briefly stopped working; the suspicion was that one manager was offloading his positions after taking losses in the mortgage market. The episode hinted at a danger of the quant approach: if computers are all churning over the same data, they may be buying the same shares. At the moment American growth stocks, such as technology companies, are as expensive, relative to global value stocks, as they were during the dotcom bubble (see chart). What if the trend changes? No mathematical formula, however clever, can find a buyer for a trader’s positions when everyone is panicking.